The Signal We Keep Compressing Away

On automated harness optimization and what it means for clinical evidence

There is a principle in pharmacology that took medicine decades to fully absorb. Liposomal doxorubicin and free doxorubicin are the same molecule. Yet one accumulates preferentially in tumors while the other damages the heart. The liposome does not make the drug more potent. It changes where the drug goes, what it encounters, what it spares; by exploiting the leaky vasculature of the tumor microenvironment and staying encapsulated in the tighter junctions of cardiac tissue. The container shapes what the active compound can do.

The same principle, it turns out, applies to AI systems. And a paper published at the end of March 2026 by researchers at Stanford, MIT, and KRAFTON gives us the clearest demonstration yet of what that means in practice and what it makes possible.

What the paper found

The paper is called Meta-Harness: End-to-End Optimization of Model Harnesses. Its subject is the code that wraps a large language model, the layer that determines what information the model sees, in what order, retrieved from where, and framed how. The authors call this the harness. It encompasses three things: memory, meaning what the system retains between interactions; retrieval, meaning what it fetches from external sources at each decision point; and prompting, meaning how context is assembled and sequenced before the model reasons about it.

The central observation is straightforward. Research cited by the authors has shown that changing only the harness around a frozen, untouched model can shift performance by a factor of six on the same benchmark. Same weights. Different wrapper. Until now, harnesses have been built entirely by hand: engineers designing prompting frameworks, retrieval strategies, and memory structures through iteration and intuition, exploring a small fraction of what is possible.

Meta-Harness automates this process. It works through a recursive loop. A candidate harness is proposed, evaluated against a set of tasks, and everything is saved in raw, uncompressed form, not a score, not a summary, but the full transcript of every prompt the model received, every response it gave, every tool call it made. A coding agent then navigates that filesystem, reads what it needs, and proposes an improved harness. The loop repeats roughly sixty times in a full run.

What distinguishes this from every prior approach to automated optimization is the refusal to compress. Systems like OPRO, TextGrad, and AlphaEvolve hand the optimizer a digest: a scalar score, a short critique, a recent snippet. Meta-Harness gives the agent the raw logs and trusts it to navigate selectively, reading a median of 82 files per iteration across the full history of prior attempts. That access to raw diagnostic history, rather than compressed summaries, turns out to be the key design decision.

The results across three domains are real and substantial. On a text classification task where a model updates its memory from labeled examples and is evaluated on held-out cases, the discovered harness outperforms the best hand-designed system by 7.7 accuracy points while using four times fewer tokens. On 200 olympiad-level mathematics problems, a single harness discovered during search improves accuracy by an average of 4.7 points across five AI models never seen during the search process, indicating the discovered strategy is genuinely general. On TerminalBench-2, a benchmark of 89 real-world tasks requiring AI agents to autonomously compile code, debug systems, and complete multi-step terminal workflows with full automated verification, the discovered harness places first among all agents running on the same underlying model, surpassing every hand-engineered competitor.

But what the paper demonstrates is not just that automated harness search works. It is that the agent doing the searching behaves in ways that matter for how we think about these systems going forward.

The agent that reads its own case files

The paper’s appendix traces, iteration by iteration, how the agent reasoned through a live search run on TerminalBench-2, starting from a strong hand-engineered baseline. What emerges is not a description of brute-force search. It is a description of systematic diagnosis.

In the first two iterations, the agent bundled a structural bugfix with a rewritten prompt template. Both regressed from the baseline. In the third iteration, rather than proposing a third fix, the agent looked back across both failures. It noticed that while the two attempts differed in their structural changes, they shared one intervention: the prompt rewrite. It separated the confounded variables and wrote verbatim into its log: “the structural bugfixes were confounded with harmful prompt changes.”

This is abductive reasoning, the same logic a careful physician applies when two patients on similar regimens both deteriorate. You do not conclude the drug failed. You ask what they had in common. You isolate. You test. The agent was performing differential diagnosis on its own previous attempts, using the filesystem as its case history and the execution traces as its clinical notes. It could only do this because it had access to the raw logs. A compressed summary would have hidden the shared intervention entirely.

By the seventh iteration, after six consecutive regressions that taught the agent where the system’s fragility lived, it stopped modifying the existing machinery and instead added something before the loop began: a snapshot of the computational environment injected into the initial prompt. Its recorded reasoning: avoid touching the previously fragile components. It had learned not just what failed but why and used that understanding to reason about risk.

This behavior (reading raw history, identifying confounds, forming causal hypotheses, reasoning about where fragility lives) is what full access to prior execution traces enables. Compressed feedback cannot support it. And it is precisely this kind of reasoning that the hand-engineering of clinical AI harnesses has always depended on human experts to provide.

The compression problem, demonstrated

To understand why this matters for biomedicine specifically, it helps to look at what happens when LLM harnesses are hand-engineered in a clinical setting.

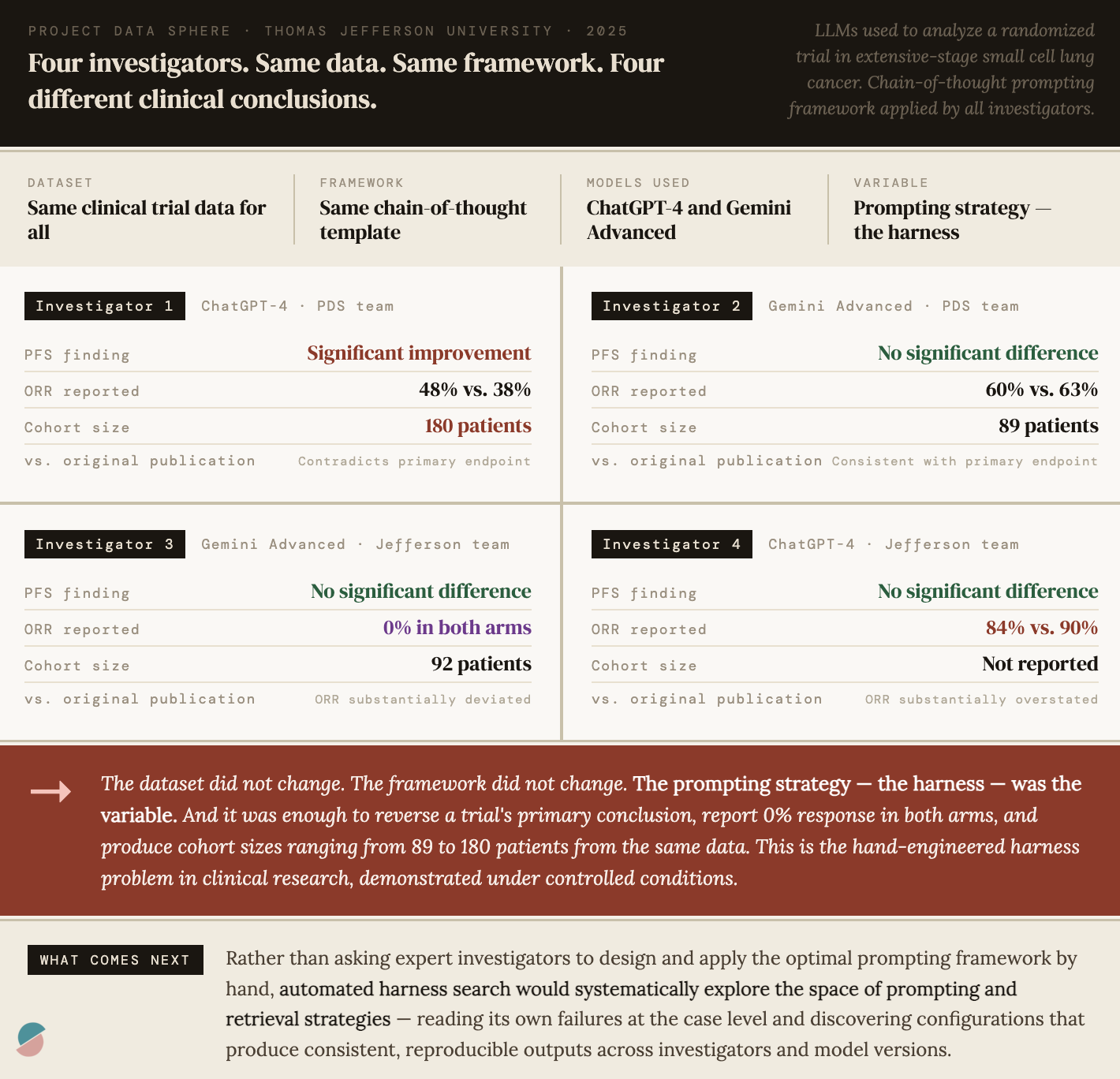

In a study we conducted last year at Project Data Sphere with Thomas Jefferson University, four independent investigators used LLMs (ChatGPT-4 and Gemini Advanced) to analyze data from a clinical trial evaluating LY2510924 combined with carboplatin and etoposide for extensive-stage small cell lung cancer. All four investigators worked from the same dataset, the same chain-of-thought prompting framework, and the same analytical objectives.

The results were interesting but inconsistent. One investigator found a statistically significant improvement in progression-free survival with the addition of LY2510924, contradicting the original publication and the other three investigators. Another reported an objective response rate that deviated substantially from every other analysis. Cohort sizes ranged from 89 to 180 participants from the same dataset, suggesting the LLMs characterized entirely different patient populations depending on how the prompts were constructed. The conclusion of the paper was clear: variability in prompting strategies drove variability in clinical conclusions, and highly trained subject matter experts remain essential to review and interpret LLM-generated analyses.

This is the harness problem in clinical research, demonstrated under controlled conditions. The chain-of-thought framework was the harness. It was hand-engineered. Different investigators interpreted it differently. The model was sensitive to subtle prompt variations. And the outputs, including whether a drug appeared to improve survival, changed accordingly.

Meta-Harness is a direct response to exactly this problem. Rather than asking human experts to design the optimal prompting framework and then hoping investigators apply it consistently, automated harness search would systematically explore the space of prompting and retrieval strategies, read its own failures at the case level, this analysis got PFS wrong, what was it shown, what did it retrieve, what framing led it astray, and discover configurations that produce consistent, reproducible outputs across investigators, model versions, and datasets. The subject matter expert moves from designing the wrapper to evaluating and validating what the automated search discovers. That is a meaningful and practical shift in how we deploy LLM-based clinical tools.

What the 40% tells us

A drug achieves a 40% ORR in a clinical trial. We call this a success. The drug is approved, deployed, and prescribed. But 60% of patients who receive it do not respond. They experience toxicity without benefit, akin to taking a toxic placebo.

Those 60% non-responders are not a rounding error. They are a population. And somewhere in the data we already have; genomic profiles, imaging series, early response signals, treatment histories, patterns of toxicity; there may be information that distinguishes them from the 40% who responded. The challenge is not always that this signal does not exist. The challenge is often that we do not know where in the vast space of available data to look for it, and that the LLM-based clinical tools we are deploying are wrapped in harnesses that were never designed to find it.

This is where automated harness search becomes directly relevant to patient care. An LLM-based decision support tool wrapping a clinical dataset will only surface the signal that its retrieval and context construction strategy is designed to find. If that strategy is hand-engineered, it reflects the intuitions of the engineers who built it. Automated harness search would systematically explore alternative retrieval policies: what patient-level data to pull, how to frame prior cases, what early signals to foreground; reading its own failures at the case level and discovering configurations that surface signal the hand-engineered wrapper missed.

Where this applies today

Countless LLM-based tools are being deployed across biomedicine right now, each with a hand-engineered harness, each leaving performance on the table in ways that automated search could address. A few meta-harness insipred opportunities to highlight:

Clinical trial analysis. Our study demonstrated this problem directly. LLM-based systems for analyzing trial data are sensitive to prompting strategies in ways that produce clinically meaningful variation in outputs. Automated harness search could discover prompting and retrieval configurations that produce consistent results across investigators and model versions, reducing the burden on subject matter experts to catch errors and standardizing the analytical process across institutions.

Clinical decision support. LLM-based systems are increasingly used to synthesize patient records, surface relevant literature, and support diagnostic and treatment decisions. The harness determines what gets retrieved from the electronic health record, how prior similar cases are identified and presented, how uncertainty is communicated to the clinician. These are wrapper decisions. They are currently made by hand, reflecting the intuitions of the development team. Automated search over these decisions, using case-level outcomes as the feedback signal, could discover retrieval and framing strategies that improve decision quality in ways that no individual engineer or clinician would have designed.

Drug development workflows. LLM-based tools are being used to synthesize preclinical literature, generate hypotheses about mechanism, and support the design of clinical development programs. The harness determines how prior experimental results are retrieved and contextualized, how mechanistic hypotheses are framed, what evidence is foregrounded when the model reasons about a new compound. Automated harness search could discover context construction strategies that improve the quality and consistency of these outputs, not by changing the model, but by changing what it sees.

Biomarker and multi-omics analysis. When LLM-based systems reason over complex biological data, integrating genomic profiles, imaging features, proteomic signatures, and clinical records, the harness determines what gets retrieved, how it is integrated, what gets foregrounded for each patient. The design space here is enormous. Automated search over retrieval and integration strategies, reading its own failures at the case level, could discover configurations that surface signal in high-dimensional biological data that hand-engineering would not find.

Regulatory and evidence synthesis. LLM-based tools are being used to synthesize clinical evidence for regulatory submissions, systematic reviews, and health technology assessments. The harness determines how evidence is retrieved, how studies are compared and contextualized, how uncertainty and heterogeneity are communicated. Inconsistencies in these outputs, analogous to those demonstrated in our study, have direct consequences for regulatory decisions. Automated harness optimization offers a path toward more consistent, reproducible evidence synthesis.

What changes for experts

None of this necessarily replaces clinical or scientific expertise. It relocates where that expertise is most valuable.

Today, expert knowledge goes into designing the harness, crafting the prompting framework, specifying the retrieval strategy, determining what context the model sees. This is painstaking, iterative, and bounded by individual intuition.

With automated harness search, expert knowledge moves to a different layer. Experts define the objective: what does a good clinical trial analysis look like, what does a useful decision support output look like, what constitutes a failure worth correcting. They evaluate and validate what the automated search discovers. They set the boundaries of what the system is permitted to do. They interpret the discovered harness and decide whether it reflects genuine clinical reasoning or a brittle artifact of the search process.

This, I believe, is a more appropriate deployment of expertise in the age of AI. The combinatorial space of possible harness configurations is too large for human intuition to explore systematically. Automated search is better at that. The evaluation of whether a discovered configuration is clinically sound, generalizable, and safe is a judgment that requires deep domain knowledge. Experts are better at that.

The compression principle

Running through all of this is a single epistemological claim that the Meta-Harness paper demonstrates more cleanly than any prior work: compressed feedback is lossy feedback, and lossy feedback leads to lossy learning.

In the paper’s controlled ablation, the system with access to raw execution traces achieves 50% median accuracy on a text classification task. The identical system with access only to scores and LLM-generated summaries achieves ~35%. Fifteen points separate a system reasoning from raw evidence and a system reasoning from digests of that evidence. That gap is the measured cost of compression, of replacing the signal with a summary and then trying to reason from the summary as if nothing had been lost.

In medicine, we compress constantly. Individual patient trajectories become survival curves. Heterogeneous treatment responses become pooled response rates. The richness of what happened to each person in a trial becomes a table entry and, eventually, a label (responder or non-responder) that discards the nuances of the outcome and everything that led to it. And here precision matters: our prized p-value is a downstream artifact, a summary of a summary. The compression that happens earlier, in the decision to aggregate heterogeneous individual trajectories into a single outcome distribution, can make the p-value meaningless. Once that aggregation is made, the p-value reports on what remains after the flattening.

We compress because reasoning over raw trajectories at scale has been historically intractable. That constraint is loosening today. What Meta-Harness demonstrates is that a system given access to raw case-level evidence can discover things that a system given only summaries cannot and that the difference is not marginal. In clinical AI, where the heterogeneity is biological and the stakes are patient outcomes, building systems that reason from richer, less compressed evidence is not just a technical improvement. It is a different epistemological commitment, one that we are now, for the first time, practically positioned to make.

The recursion

Meta-Harness is itself a harness. The coding agent that proposes new harnesses is wrapped in a skill document defining its role, its constraints, its objective. That document is, in principle, subject to optimization too. What happens when the outer loop optimizes not just the inner harness but the proposer’s own search procedure? The loop accelerates. The engineer becomes, progressively, a curator.

This is not a distant prospect. It is the obvious next experiment given the capabilities that exist today. And it implies a trajectory in which the performance ceiling of LLM-based systems is no longer bounded by the bandwidth of the engineers designing their wrappers but by the quality of the feedback signal and the clarity of the objective.

That means the most important design decisions going forward are not which model to use or how large to make it. They are: what does success look like at the case level, how do we measure it, and how do we build the feedback infrastructure that lets automated search find the wrapper configurations that achieve it. In biomedicine, those are questions that require clinical expertise, regulatory judgment, and scientific rigor. They are also questions that, once answered well, unlock a new generation of LLM-based tools that are more consistent, more generalizable, and more useful than anything hand-engineering alone can produce.

Like liposomal doxorubicin, the harness changes what the active compound can do. Unlike the drug, it can redesign itself. And unlike the chemist, it never stops iterating.